If You're Abstracting Correctly, You Don't Feel Lost

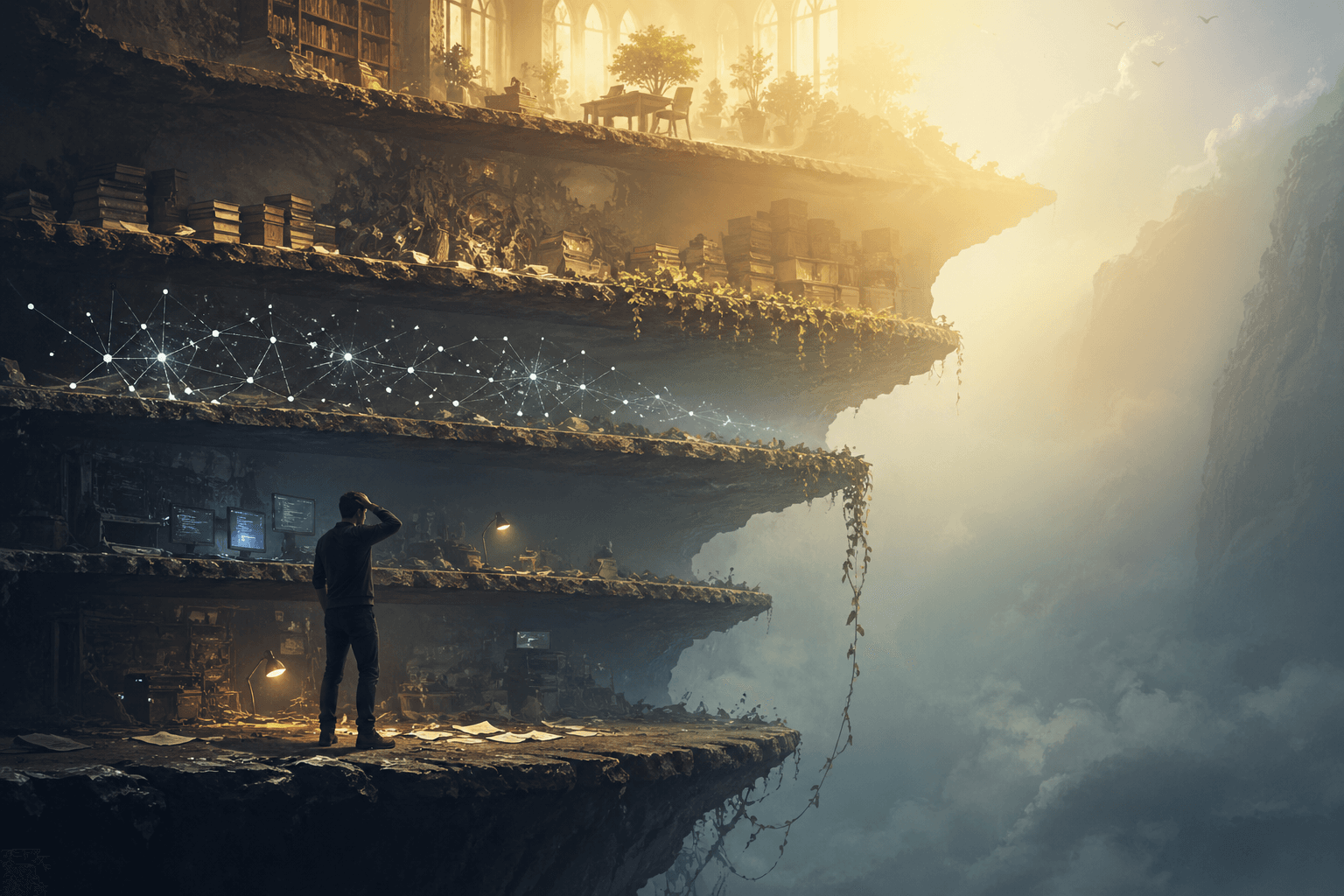

A senior engineer told me last month that he felt lost. Not in a small way. He could ship features. He could write code. He'd been doing it for fifteen years. But the abstraction had moved up a floor and he hadn't noticed in time.

He's not the only one. The disorientation is everywhere right now. And the most useful frame I have for it is borrowed: if you're abstracting correctly, you don't feel lost. If you do, you're on the wrong layer — or you're missing a layer you used to know cold.

The strange part is that the missing layer is one we already had. We just forgot it.

English is becoming a primitive

Andrej Karpathy has been pushing on a version of this for a couple of years. The line that keeps circulating — "the hottest new programming language is English" — sounds like a joke until you watch what happens when an experienced engineer takes it seriously.

In one of his recent vibe-coding sessions, Karpathy didn't write Rust. He wrote a markdown spec — in English, with structure, with tests-as-prose, with the constraints made explicit — and let the model produce the Rust. The spec was the source of truth. The Rust was a regenerable artifact downstream of it. When the spec changed, the Rust changed. When the Rust drifted, the spec was still right.

That is a real abstraction shift, and it's not abstract. It's already happening in production codebases. The artifact you used to call "code" is becoming a derived output. The artifact that matters — the thing that has to be right, structured, versioned, reviewed — is the spec. Which is to say: the language. Which is to say: the knowledge.
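One way to make "the spec is the source of truth" concrete is to stamp every generated artifact with a fingerprint of the spec it came from, so drift is detectable. The sketch below is an illustration under assumptions, not a description of Karpathy's workflow: the function names and the `// generated-from-spec:` header are hypothetical, and the model call itself is left out.

```python
import hashlib

HEADER = "// generated-from-spec: "  # hypothetical marker, chosen for this example


def spec_digest(spec_text: str) -> str:
    """Fingerprint the spec, ignoring trailing whitespace and blank edges."""
    normalized = "\n".join(line.rstrip() for line in spec_text.strip().splitlines())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def stamp(generated_code: str, spec_text: str) -> str:
    """Prefix generated output with the digest of the spec that produced it."""
    return f"{HEADER}{spec_digest(spec_text)}\n{generated_code}"


def is_stale(stamped_code: str, spec_text: str) -> bool:
    """True when the artifact was generated from a different version of the spec."""
    lines = stamped_code.splitlines()
    first_line = lines[0] if lines else ""
    return first_line != f"{HEADER}{spec_digest(spec_text)}"


spec_v1 = "# Parser\n- Reject empty input.\n"
artifact = stamp("fn parse() {}", spec_v1)
spec_v2 = spec_v1 + "- Reject input over 1 MB.\n"
```

Here `is_stale(artifact, spec_v1)` is false and `is_stale(artifact, spec_v2)` is true: once the spec changes, the old code is marked for regeneration rather than hand-patched.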

The senior engineer's lost feeling was almost entirely this. He was still doing the work one layer below where the value had moved. He wasn't wrong. He was a step late.

Knowledge management is back from 1995

Here is the part most of the AI conversation is missing.

Anyone who used a computer in the DOS era — or hauled around a binder in a 1995 office — already knew this discipline. We had folders. We had naming conventions. We had hierarchies. We had a "shared drive" that was actually shared because someone curated it. Knowledge management was a job. Information architecture was a job. Library science was a job. We knew that the structure of what you stored mattered as much as what you stored.

Then the search era arrived and told us we could stop. Dump everything into the bucket. Google would find it. Dropbox would store it. The corpus didn't need shape because retrieval was cheap. For about a decade, we got away with it.

LLMs have ended that grace period. A model that reads from an unstructured pile produces unstructured output: confidently wrong on the version of a policy, citing a deprecated SOP, mixing two clients' data because the embeddings happened to be close. The retrieval problem that "search" papered over is now load-bearing again. And the discipline that solves it is older than any of the AI tooling we're arguing about.
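A minimal sketch of that failure, and of the structural fix, assuming each document already carries `client` and `status` metadata and that similarity scores come from whatever retriever is in use. The `Doc` fields, ids, and scores are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    client: str
    status: str   # "current" or "superseded" (assumed vocabulary)
    score: float  # similarity to the query, supplied by the retriever


def eligible(docs, client):
    """Keep only documents this client may see that are still in force."""
    return [d for d in docs if d.client == client and d.status == "current"]


def retrieve(docs, client, k=3):
    """Filter on structure first, then rank by similarity."""
    return sorted(eligible(docs, client), key=lambda d: d.score, reverse=True)[:k]


corpus = [
    Doc("acme-refund-v1", "acme", "superseded", 0.93),
    Doc("acme-refund-v2", "acme", "current", 0.88),
    Doc("globex-refund-v4", "globex", "current", 0.91),
]

# Ranking the raw pile picks the superseded policy because it scores highest.
naive_top = max(corpus, key=lambda d: d.score)

# Ranking the filtered set returns the policy that is actually in force.
filtered_top = retrieve(corpus, "acme")[0]
```

The ranking step is identical in both cases. The only difference is whether the corpus has enough structure to say which documents are eligible.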

It's folders. It's tags. It's links between documents. It's stewards who decide what's current and what's superseded. It's the wiki — which I've been calling context-as-net, and which is exactly what ContextNest implements. Wiki-style links between documents become a graph the model can walk. Tags become typed sets. Stewardship becomes load-bearing metadata. None of this is novel. It's pre-cloud knowledge management plus enough structure to be queryable by an agent.
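To show how little machinery this takes, here is a rough sketch of links as a walkable graph, tags as sets, and stewardship as metadata. It does not reflect ContextNest's actual format or API: the `[[Title]]` link syntax, the field names, and the sample documents are all assumptions made for the example.

```python
import re
from collections import deque

LINK = re.compile(r"\[\[([^\]]+)\]\]")  # assumed wiki-link syntax: [[Title]]

docs = {
    "Refund Policy": {"tags": {"policy"}, "steward": "finance-ops",
                      "superseded_by": None,
                      "body": "See [[Escalation SOP]] and [[Pricing]]."},
    "Escalation SOP": {"tags": {"sop"}, "steward": "support",
                       "superseded_by": "Escalation SOP v2",
                       "body": "Old steps."},
    "Escalation SOP v2": {"tags": {"sop"}, "steward": "support",
                          "superseded_by": None,
                          "body": "Follow [[Refund Policy]]."},
    "Pricing": {"tags": {"policy"}, "steward": "finance-ops",
                "superseded_by": None, "body": "No links here."},
}


def current_version(docs, title):
    """Follow superseded_by pointers until reaching the document in force."""
    seen = set()
    while docs[title]["superseded_by"] is not None and title not in seen:
        seen.add(title)
        title = docs[title]["superseded_by"]
    return title


def tagged(docs, tag):
    """Tags as sets: every current document carrying the tag."""
    return {t for t, d in docs.items()
            if tag in d["tags"] and d["superseded_by"] is None}


def walk(docs, start, max_hops=2):
    """Breadth-first walk over links, swapping stale targets for current ones."""
    start = current_version(docs, start)
    order, seen = [start], {start}
    queue = deque([(start, 0)])
    while queue:
        title, hops = queue.popleft()
        if hops == max_hops:
            continue
        for target in LINK.findall(docs[title]["body"]):
            if target not in docs:
                continue  # dangling link: skip rather than guess
            target = current_version(docs, target)
            if target not in seen:
                seen.add(target)
                order.append(target)
                queue.append((target, hops + 1))
    return order


context = walk(docs, "Refund Policy")
```

Walking from "Refund Policy" yields the policy, the current version of the SOP, and the pricing page. The stale SOP is never handed to the model, because a steward recorded what replaced it.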

If your AI initiative feels like it's spinning, the missing layer is almost always this one. Not the model. Not the agent framework. Not the tool stack. The structure of what your organization knows.

What "the right layer" looks like now

The practical stack for anyone shipping AI-aware systems has roughly six floors:

| Layer | What lives here |
|---|---|
| Intent / spec / English | The thing the system has to do, written down clearly enough that a model and a human can both read it. |
| Knowledge / context structure | Folders, tags, links, provenance, stewardship. The graph the model reads from. |
| Tools / protocols | MCP, function calls, retrieval. How the model reaches into systems. |
| Model behavior | Choice of model, prompt design, evaluation. |
| Code / runtime | The deterministic floor. The parts that must be exactly right. |
| Infra / silicon | Throughput, latency, cost. |

Two failure modes account for most of the lost feeling:

- Your layer collapsed. The thing you used to abstract over is leaking. The prompt that worked Tuesday fails Thursday. The retrieval that was fine in pilot returns the wrong policy in production. You can't trust the floor anymore.

- You're a layer too low. The work is real, but it's not where the value lives. You're rewriting retrieval code when the question is whether the corpus has structure. You're tuning prompts when the spec is ambiguous. You're picking models when the knowledge architecture isn't there.

Most of the lost senior people I see right now are in the second category. The fix isn't to grind harder at the layer they're on. It's to step up to the layer the value moved to — usually intent or knowledge structure — and start the work there.

Vibe coding is a layer choice

The argument about vibe coding misses this. It treats the question as "are you a real engineer" or "is the AI good enough yet." The real question is which layer the work belongs on.

For a prototype where the constraint is iteration speed, English is the right layer. You describe, the model generates, you revise. Dropping into the code to argue with semicolons is working below the abstraction for no reason.

For a payment processor where the constraint is correctness, English alone is the wrong layer. The floor of correctness is below where natural language can hold it. You drop down. You write tests. You read the diff.
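A small illustration of why, using a made-up requirement: "split the charge evenly." In English that sounds complete. In code you have to decide who absorbs the leftover cent, and a test has to hold the decision in place. The function name and the rule that the first parties take the remainder are assumptions for this sketch, not a standard.

```python
def split_cents(total_cents: int, parties: int) -> list[int]:
    """Split an amount in integer cents so that no cent is created or lost."""
    if parties <= 0:
        raise ValueError("parties must be positive")
    if total_cents < 0:
        raise ValueError("total_cents must be non-negative")
    base, remainder = divmod(total_cents, parties)
    # Assumed rule: the first `remainder` parties each pay one extra cent.
    return [base + 1 if i < remainder else base for i in range(parties)]


shares = split_cents(1000, 3)

# The tests state what the English sentence left open.
assert sum(shares) == 1000             # money is conserved
assert max(shares) - min(shares) <= 1  # "evenly" means within one cent
```

Neither assertion follows from the sentence "split the charge evenly." Both are decisions, and this is the layer where they get written down and checked.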

For an enterprise AI initiative where the constraint is trust, the layer is neither English nor code. It's the knowledge layer underneath both — the structure of the corpus the model reads from. Get that right and the upper layers become mostly orchestration. Skip it and no amount of cleverness above will hold.

The skill isn't picking one layer for good. It's knowing which layer the current problem belongs on.

The diagnostic

When you feel lost, run the check:

- What layer am I on? Name it. Vague answers are a tell.

- Is this where the value lives? Or have I defaulted to the layer I'm most comfortable with?

- Is the layer underneath holding? A leaking floor is a different problem from being on the wrong layer.

- Have I checked the layer we used to know, the one we forgot? Folders, files, links, hierarchies, stewardship. Knowledge management is back, and most teams haven't realized it.

If the missing piece is at the structure layer, no amount of work at the model or prompt layer will fix it. You're trying to grow a forest on a tarp.

What hasn't changed

The lesson Karpathy keeps surfacing isn't about AI. It's about engineering judgment in a world where the stack is rebuilding itself in public. Pick the layer. Trust it until it leaks. When it leaks, drop down — but only as far as you have to. The layers haven't gone away. They've just rearranged. English is becoming load-bearing at the top. Knowledge architecture is becoming load-bearing in the middle. Code is consolidating into the parts that genuinely require precision.

If you're abstracting correctly, you don't feel lost. If you do, the move isn't to grind harder. It's to find the layer the work has moved to — often the one we forgot — and start there.
